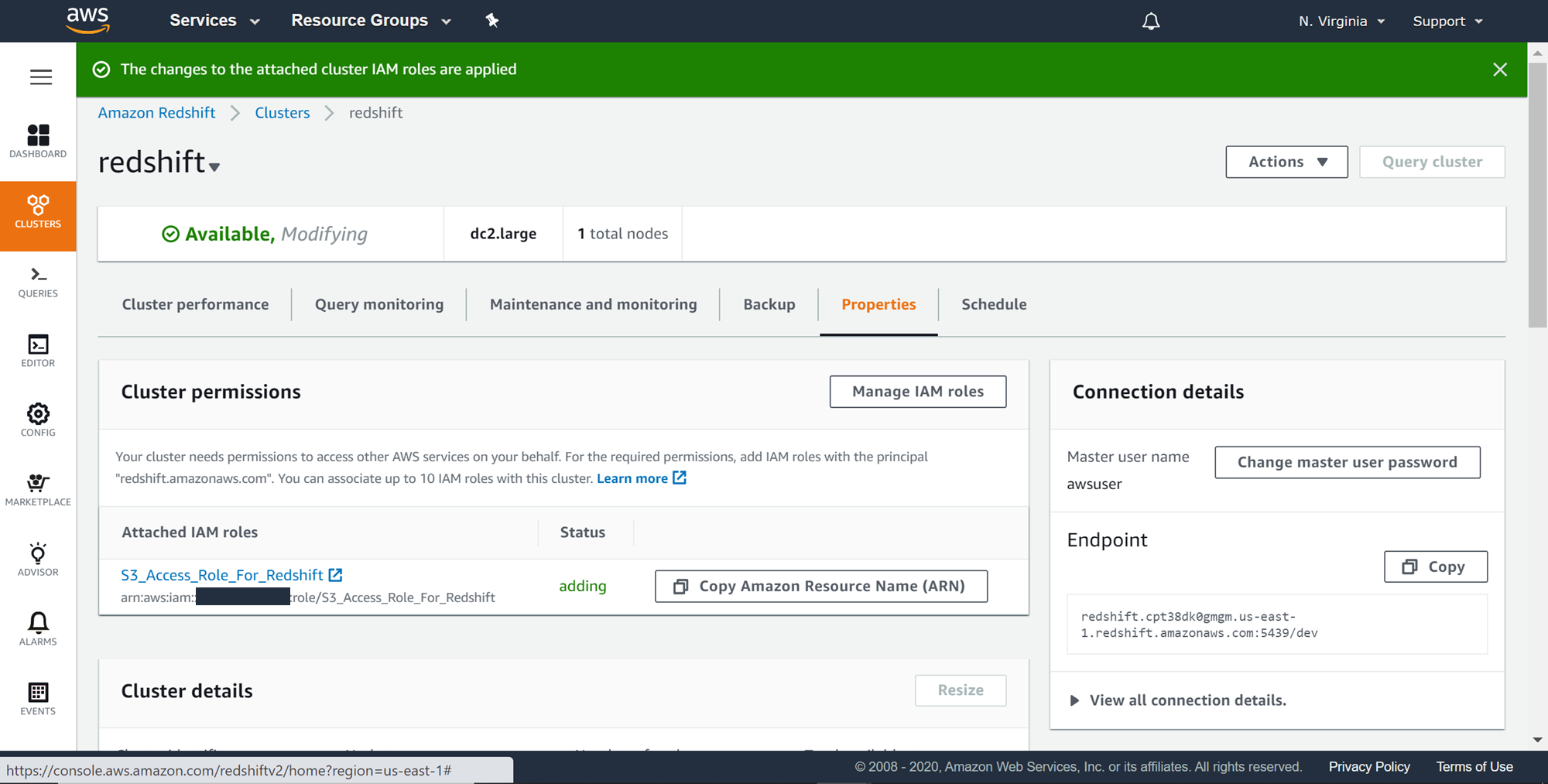

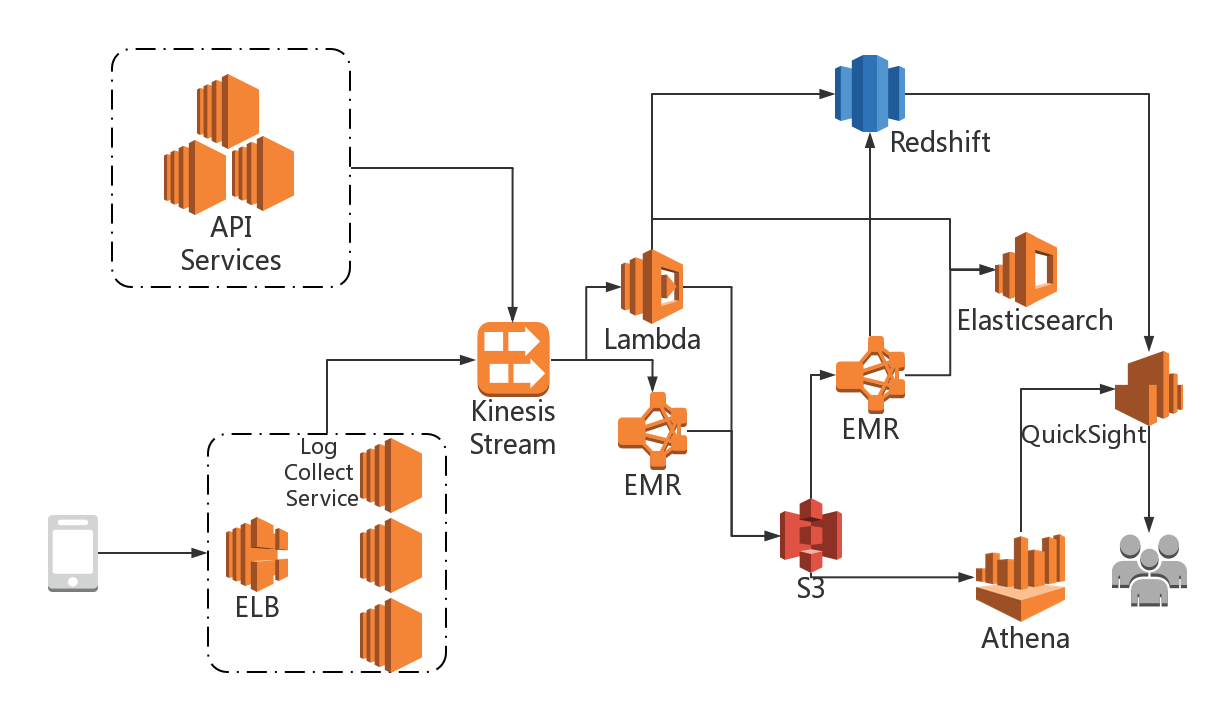

RedShift Unload to S3 With Partitions - Stored Procedure Way

Redshift UNLOAD is the fastest way to export data from a Redshift cluster. In the big data world, people generally use the data in S3 as a data lake, so it is important that the data in S3 is partitioned. UNLOAD gives an option to unload the data by partition.

Suppose, following the AWS docs, I'd like to use an unload command like: `unload ('SELECT col1, col2, col3, current_date as partitionbyme FROM dummy') to 's3://mybucket/dummy/' partition by (partitionbyme) iam_role 'arn of IAM role' kms_key_id 'arn of kms key' encrypted FORMAT AS`. Is there any way to directly export a JSON file to S3 from Redshift using UNLOAD? I'm not seeing anything in the documentation (Redshift UNLOAD documentation), but maybe I'm missing something. The COPY command supports loading JSON, so I'm surprised that there's no JSON flag for UNLOAD.

The AWS documentation's example "Unload VENUE to a pipe-delimited file (default delimiter)" unloads the VENUE table and writes the data to s3://mybucket/unload/.

By default, an S3 object is owned by the AWS account that uploaded it. This is true even when the bucket is owned by another account. Therefore, when Amazon Redshift data files are put into your bucket by another account, you don't have default permission for those files. To get access to the data files, an AWS Identity and Access Management (IAM) role with cross-account permissions must run the UNLOAD command again.

Follow these steps to set up the Amazon Redshift cluster with cross-account permissions to the bucket:

1. From the account of the S3 bucket, create an IAM role with permissions to the bucket (the bucket role).
2. From the account of the Amazon Redshift cluster, create another IAM role with permissions to assume the bucket role (the cluster role).
3. Update the bucket role to grant bucket access, and then create a trust relationship with the cluster role.
4. From the Amazon Redshift cluster, run the UNLOAD command using the cluster role and bucket role.

Important: This resolution doesn't apply to Amazon Redshift clusters or S3 buckets that use server-side encryption with AWS Key Management Service (AWS KMS).

Resolution

From the account of the S3 bucket, create an IAM role with permissions to the bucket:

1. From the account of the S3 bucket, open the IAM console.
2. Create an IAM role. As you create the role, select the following:
   - For Select type of trusted entity, choose AWS service.
   - For Choose the service that will use this role, choose Redshift.
   - For Select your use case, choose Redshift - Customizable.
3. After you create the IAM role, attach a policy that grants permission to the bucket.
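The cross-account setup described above (a bucket role that trusts a cluster role, followed by an UNLOAD that uses both roles) can be sketched in Python as plain string and dictionary builders. This is a minimal sketch, not a definitive implementation: the account IDs, role names, and helper function names are hypothetical placeholders, and the code only produces the policy documents and the SQL text. It does not call AWS or Redshift. It assumes Redshift role chaining, where `IAM_ROLE` takes the cluster role ARN followed by the bucket role ARN, separated by a comma with no spaces.

```python
import json

# Hypothetical placeholder ARNs; substitute your own account IDs and role names.
CLUSTER_ROLE_ARN = "arn:aws:iam::111111111111:role/cluster-role"
BUCKET_ROLE_ARN = "arn:aws:iam::222222222222:role/bucket-role"


def bucket_role_trust_policy(cluster_role_arn: str) -> dict:
    """Trust policy for the bucket role: lets the cluster role assume it."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": cluster_role_arn},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def cluster_role_assume_policy(bucket_role_arn: str) -> dict:
    """Permissions policy for the cluster role: allows assuming the bucket role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "sts:AssumeRole",
                "Resource": bucket_role_arn,
            }
        ],
    }


def chained_iam_role(cluster_role_arn: str, bucket_role_arn: str) -> str:
    """Cluster role first, then the role it assumes; comma-separated, no spaces."""
    return f"{cluster_role_arn},{bucket_role_arn}"


def build_unload(query: str, s3_prefix: str, iam_role: str) -> str:
    """Build the UNLOAD text. Single quotes inside the query are doubled,
    because the whole query is itself wrapped in single quotes."""
    escaped = query.replace("'", "''")
    return f"UNLOAD ('{escaped}')\nTO '{s3_prefix}'\nIAM_ROLE '{iam_role}';"


if __name__ == "__main__":
    print(json.dumps(bucket_role_trust_policy(CLUSTER_ROLE_ARN), indent=2))
    print(json.dumps(cluster_role_assume_policy(BUCKET_ROLE_ARN), indent=2))
    role = chained_iam_role(CLUSTER_ROLE_ARN, BUCKET_ROLE_ARN)
    print(build_unload("select * from venue", "s3://mybucket/unload/", role))
```

Keeping the policy documents and the statement as separate builders makes it easy to review each piece before pasting the JSON into the IAM console or running the SQL from a client connected to the cluster.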
